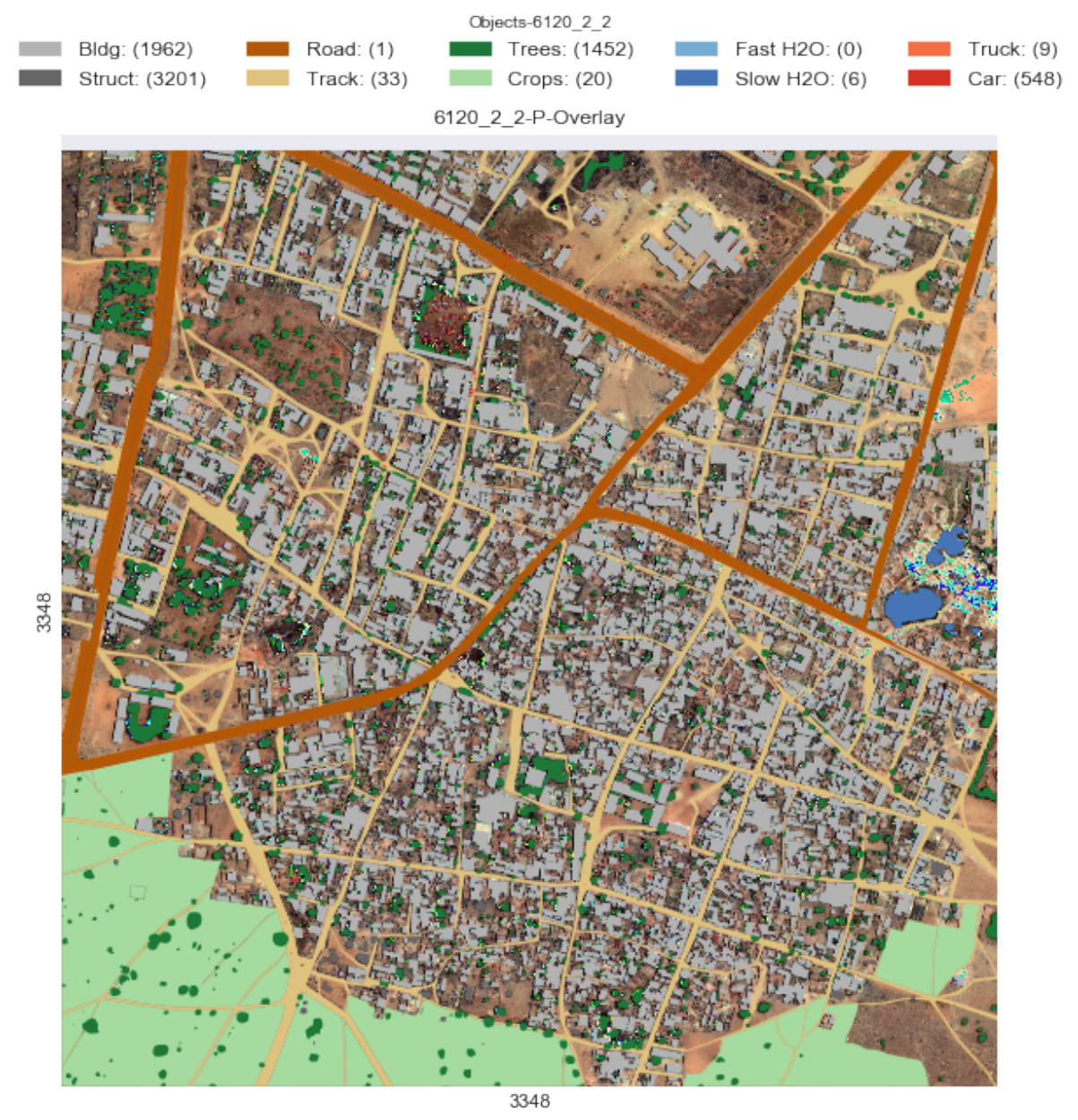

I recently worked on an interesting project using deep learning for semantic segmentation of multispectral satellite images. The goal was to accurately assign each pixel to one of 10 classes, such as buildings, roads, trees, water bodies, and vehicles. This makes it possible to create detailed maps from the imagery. The dataset came from the DSTL Satellite Imagery Feature Detection challenge on Kaggle.

Since this is a Kaggle competition, it's worth listing references and inspiration before the main text.

- I was inspired by this kernel

- and this kernel for the U-Net architecture.

- As well as this medium post for walking me through concepts.

- And a leaderboard-topping approach.

Dealing with Sparse Satellite Data

One of the first challenges was that the training dataset consisted of only 25 labeled satellite images covering various land areas. With deep learning models being notoriously data hungry, I was worried that 25 images would be too few to train a model properly. Each image was also captured in 20 different spectral bands at varying resolutions. This makes the images densely packed with spectral information, which is great for classification but hard to feed directly to a convolutional neural network.

To make the most of the limited data, I explored techniques like pansharpening, which merges the high-resolution panchromatic image with the lower-resolution multispectral bands. This increased the level of detail available for training. I also calculated various vegetation and water indices from different spectral bands to quickly classify landscape regions as vegetation or water bodies. This simplified the problem space for the main semantic segmentation model.
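As a rough illustration of the index step, here is a minimal NumPy sketch of two common normalized-difference indices, NDVI for vegetation and NDWI for water. The post does not say which indices were actually used, so the choice of NDVI and NDWI, the function names, the toy reflectance values, and the thresholds are all assumptions made for this example.

```python
import numpy as np

def normalized_difference(a, b, eps=1e-6):
    """Return (a - b) / (a + b); eps guards against division by zero."""
    a = np.asarray(a, dtype=np.float64)
    b = np.asarray(b, dtype=np.float64)
    return (a - b) / (a + b + eps)

def ndvi(nir, red):
    """Normalized Difference Vegetation Index: high over healthy vegetation."""
    return normalized_difference(nir, red)

def ndwi(green, nir):
    """Normalized Difference Water Index: high over open water."""
    return normalized_difference(green, nir)

# Toy 2x2 reflectance values (illustrative only, not taken from the dataset).
nir = np.array([[0.80, 0.10], [0.70, 0.05]])
red = np.array([[0.10, 0.10], [0.20, 0.04]])
green = np.array([[0.10, 0.40], [0.15, 0.30]])

# The thresholds are assumptions; in practice they would be tuned per scene.
vegetation_mask = ndvi(nir, red) > 0.4
water_mask = ndwi(green, nir) > 0.3
```

In this toy example the left column is flagged as vegetation and the right column as water. Masks like these can serve as a cheap first-pass classification or as extra input channels for the segmentation model.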

Training a U-Net Architecture

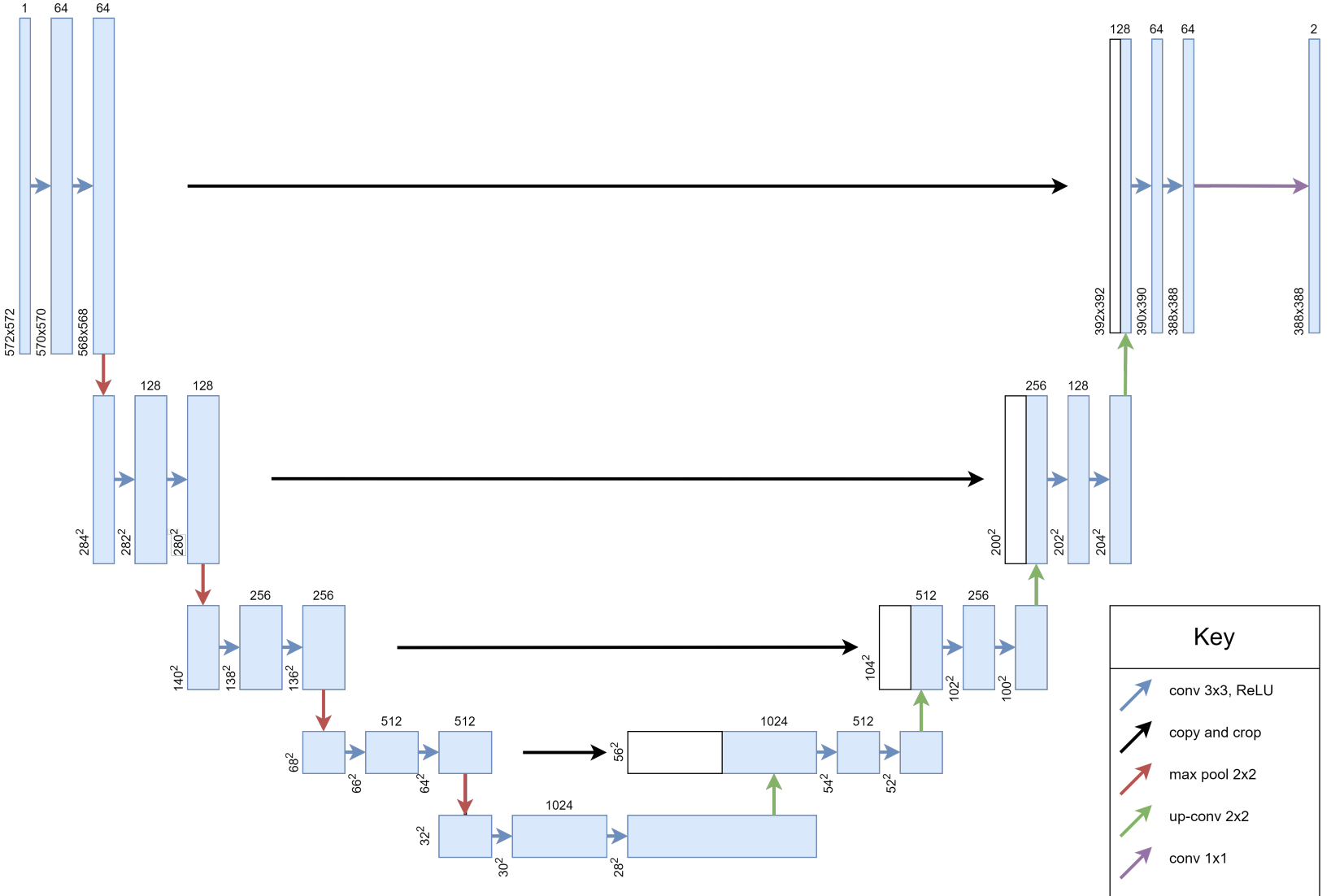

For semantic segmentation, I decided to use a U-Net architecture. Originally built for biomedical image segmentation, U-Nets work well even with small datasets. They supplement a typical contracting convolutional network with successive upsampling layers, and they combine the high-resolution features from early layers with the upsampled output, which lets the network segment images precisely.
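To make the data flow concrete, the sketch below traces the shape of a feature map through one downsampling step, one upsampling step, and one skip connection, using plain NumPy. It is not a trainable network and not the model from this project: the convolutions that a real U-Net applies at every level are left out, and the function names and array sizes are hypothetical.

```python
import numpy as np

def max_pool_2x2(x):
    """Downsample an (H, W, C) feature map by taking the max over 2x2 blocks."""
    h, w, c = x.shape
    return x.reshape(h // 2, 2, w // 2, 2, c).max(axis=(1, 3))

def upsample_2x(x):
    """Nearest-neighbour upsampling of an (H, W, C) feature map by a factor of 2."""
    return x.repeat(2, axis=0).repeat(2, axis=1)

def skip_merge(encoder_features, decoder_features):
    """Concatenate encoder and decoder features along the channel axis."""
    return np.concatenate([encoder_features, decoder_features], axis=-1)

rng = np.random.default_rng(0)
enc1 = rng.random((8, 8, 4))      # high-resolution features from an early layer
bottleneck = max_pool_2x2(enc1)   # shape (4, 4, 4): coarser, wider context
up1 = upsample_2x(bottleneck)     # shape (8, 8, 4): back at the early resolution
merged = skip_merge(enc1, up1)    # shape (8, 8, 8): fine detail plus context
```

The concatenation is the important part: the decoder sees both the coarse, context-rich features and the original fine-grained ones, which is what allows sharp object boundaries in the output mask.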

I trained the U-Net for 50 epochs using the Adam optimizer and binary cross-entropy loss, with the Jaccard similarity coefficient as the metric for segmentation accuracy. For extensive data augmentation, I randomly cropped patches from the 25 images and applied transformations to them.
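The loss, the metric, and the patch sampling can each be written in a few lines. The following NumPy sketch is an illustration under assumptions rather than the training code from this project: the smoothing constants, the patch size, and the choice of random flips as the transformations are all hypothetical, since the post does not specify them.

```python
import numpy as np

def jaccard_coef(y_true, y_pred, smooth=1e-12):
    """Soft Jaccard index: intersection over union of two masks in [0, 1]."""
    intersection = np.sum(y_true * y_pred)
    union = np.sum(y_true) + np.sum(y_pred) - intersection
    return float((intersection + smooth) / (union + smooth))

def binary_cross_entropy(y_true, y_pred, eps=1e-7):
    """Mean per-pixel binary cross-entropy; predictions are clipped for stability."""
    y_true = np.asarray(y_true, dtype=np.float64)
    y_pred = np.clip(np.asarray(y_pred, dtype=np.float64), eps, 1 - eps)
    return float(-np.mean(y_true * np.log(y_pred)
                          + (1 - y_true) * np.log(1 - y_pred)))

def random_patch(image, mask, size, rng):
    """Crop the same random size x size window from image and mask,
    then apply the same random horizontal and vertical flips to both."""
    h, w = mask.shape[:2]
    top = rng.integers(0, h - size + 1)
    left = rng.integers(0, w - size + 1)
    img = image[top:top + size, left:left + size]
    msk = mask[top:top + size, left:left + size]
    if rng.random() < 0.5:            # horizontal flip
        img, msk = img[:, ::-1], msk[:, ::-1]
    if rng.random() < 0.5:            # vertical flip
        img, msk = img[::-1], msk[::-1]
    return img, msk

# Toy masks: one overlapping pixel, three pixels in the union.
y_true = np.array([[1, 1], [0, 0]], dtype=np.float32)
y_pred = np.array([[1, 0], [1, 0]], dtype=np.float32)
iou = jaccard_coef(y_true, y_pred)    # approximately 1/3
```

Cropping the image and the mask with the same window and the same flips keeps the labels aligned with the pixels, and drawing many such patches from each of the 25 scenes yields far more training samples than the scenes alone.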

Promising Results on Satellite Segmentation

The results were quite positive, with a score of 0.40 on the competition leaderboard. The model performed especially well in detecting buildings, crops and waterways thanks to the preprocessed indices. Performance for vehicles was weaker, likely due to insufficient training examples.

Overall, this project demonstrates that with the right techniques, deep learning can unlock detailed semantic maps from even sparse multispectral satellite data. By combining U-Nets with spectral preprocessing, I have a framework that can be generalized to segmentation tasks on other satellite datasets. My next steps are to expand the training data and experiment with extracting individual objects. Exciting times ahead!

To see more details, check out the paper.

Further Reading:

- Fully Convolutional Networks for Semantic Segmentation

- U-Net: Convolutional Networks for Biomedical Image Segmentation

Disclaimer: This project was completed as part of my MSc in Data Science at Lancaster University. This blog post is LLM-generated text based on the hand-written report.